For many years now, the virt-manager application has been the primary open source tool for managing virtual machines under libvirt on Fedora/Linux hosts. It has tried to satisfy server and desktop users alike, with the result that neither userbase was often really happy. The decision to use libvirt as the foundation of OpenStack, OpenNebula and various other cloud projects has been a great validation of libvirt’s capabilities. More recently, the open sourcing of the RHEV-M product to create the oVirt community project has been another step forward for open source data center virtualization management based upon libvirt. Finally, with today’s very first release of GNOME Boxes, the same step forward is happening for Linux desktop virtualization. No longer will desktop virtualization (or remote desktop access) feel like an afterthought; instead it will be a seamless part of the GNOME 3 desktop experience. This is coming to a Fedora release near you soon: the target is Fedora 17.

What does this mean for virt-manager, you might wonder? Well, first of all, let me reassure people that virt-manager isn’t going away any time in the foreseeable future. There will always be people who prefer straightforward, directly controllable applications that do not try to impose clever policies on their usage. virt-manager, virsh, virt-install, etc. all fill this gap, and we don’t want to take that control away from people. With the growth in usage of OpenStack for cloud, oVirt for data center management, and GNOME Boxes for desktop virtualization, it is clear, though, that virt-manager will have a diminished role and userbase in the future. I don’t consider this to be a bad thing. On the contrary, it shows just how strong and diverse the open source virtualization community has become. Where once there was only virt-manager, today we have a wide choice of applications providing highly effective virtualization solutions targeted at the needs of their respective userbases.

Part I of this posting walked through the steps to create an iSCSI target and LUN on a QNAP server. Part II now considers provisioning guests using iSCSI storage from virt-manager.

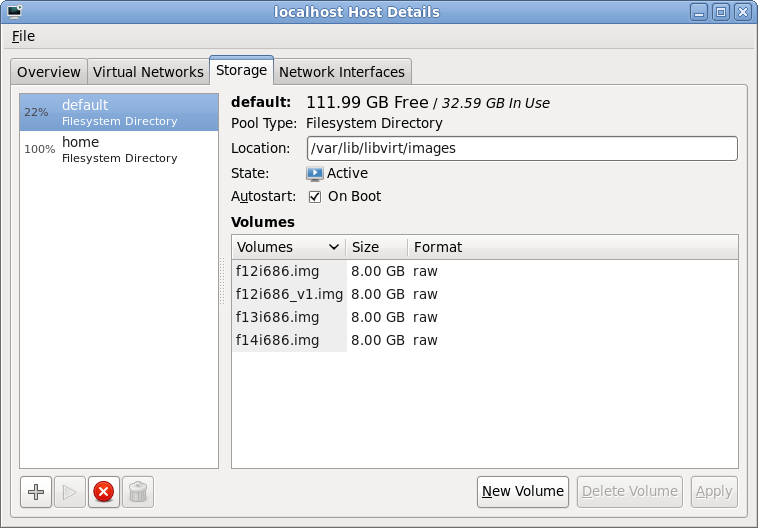

Storage pool management

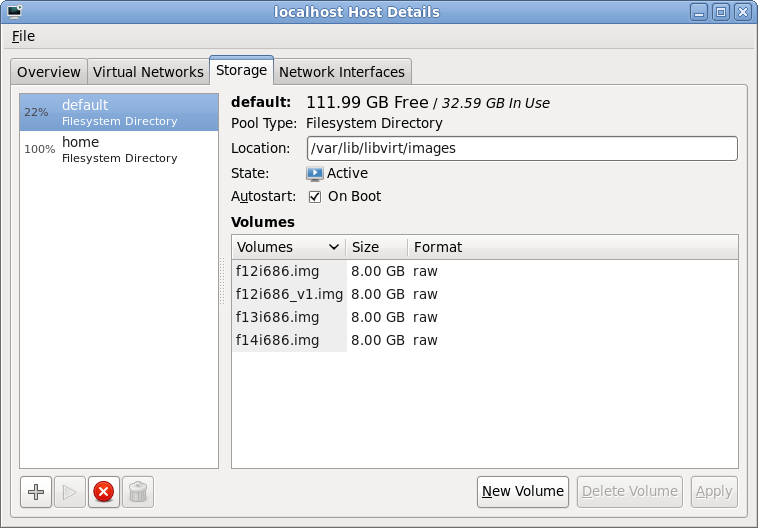

After launching virt-manager and connecting to the desired hypervisor (QEMU/KVM in my case), the first step is to open the storage management pane. From the main virt-manager window, select the menu “Edit -> Host Details” and then navigate to the “Storage” tab. The host shown here already has two storage pools configured, both pointing at local filesystem directories. The “default” storage pool will usually be visible on all libvirt hosts managed by virt-manager, and lives in /var/lib/libvirt/images. This isn’t much use if you plan to migrate guests between machines, because migration requires some form of storage shared between the hosts. This is where iSCSI comes into the equation. To add an iSCSI storage pool, click the “+” button in the bottom-left of the window.
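To make the local versus shared distinction concrete, here is a small sketch that reads a storage pool definition and reports whether its type is network-backed. The XML mirrors how the “default” directory pool is usually defined. The set of network-backed types is an assumption, deliberately limited to `iscsi` and `netfs`; it ignores cases such as a directory pool that happens to sit on an NFS mount.

```python
import xml.etree.ElementTree as ET

# The "default" pool as libvirt typically defines it: a plain local directory.
DEFAULT_POOL_XML = """
<pool type='dir'>
  <name>default</name>
  <target>
    <path>/var/lib/libvirt/images</path>
  </target>
</pool>
"""

# Assumption: a deliberately simplified list of pool types whose storage
# lives on the network rather than on a single host's local disks.
NETWORK_POOL_TYPES = {"iscsi", "netfs"}


def describe_pool(pool_xml: str) -> tuple[str, str, str, bool]:
    """Return (name, type, target path, network_backed) for a pool definition."""
    root = ET.fromstring(pool_xml.strip())
    pool_type = root.get("type", "")
    name = root.findtext("name", default="")
    path = root.findtext("target/path", default="")
    return name, pool_type, path, pool_type in NETWORK_POOL_TYPES


if __name__ == "__main__":
    name, pool_type, path, shared = describe_pool(DEFAULT_POOL_XML)
    print(f"{name}: type={pool_type} path={path} network-backed={shared}")
```

A `dir` pool reports itself as not network-backed, which is exactly why it cannot serve as the common disk location for guests that migrate between hosts.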

Storage pool list

Adding a storage pool

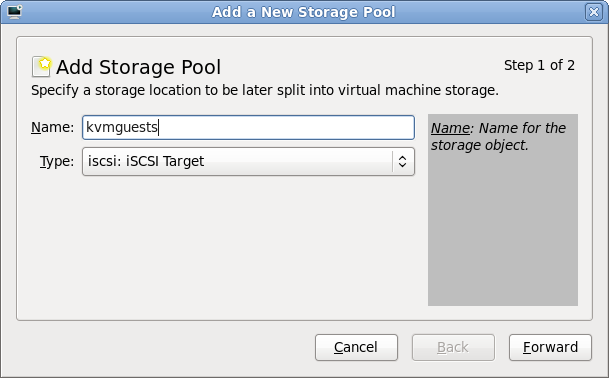

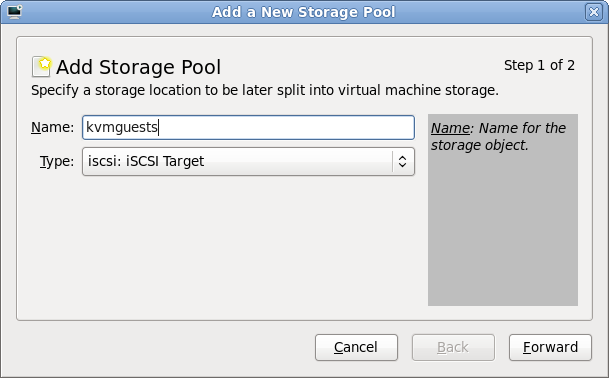

The first stage of setting up a storage pool is to provide a short name and select the type of storage to be accessed. For the sake of consistency, this example gives the storage pool the same name as the iSCSI target previously configured on the QNAP.

Storage pool creation

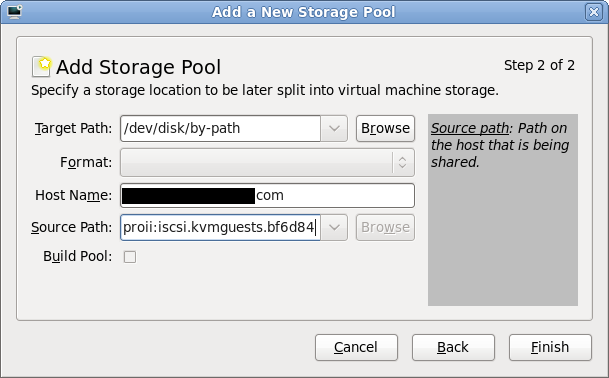

Entering iSCSI parameters

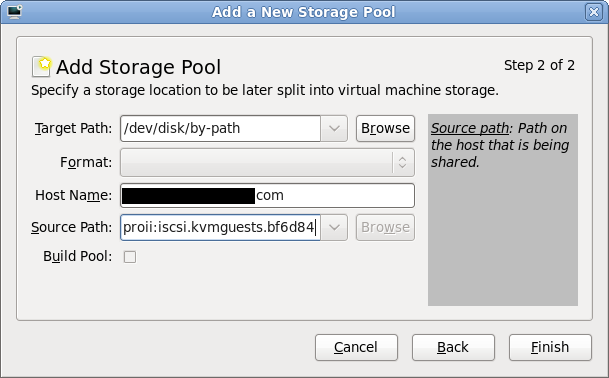

The “Target path” determines how libvirt will expose device paths for the pool. Paths like /dev/sda, /dev/sdb, etc. are not a good choice because they are not stable across reboots, or across machines in a cluster: the kernel assigns these names on a first come, first served basis. Thus virt-manager helpfully suggests using paths under “/dev/disk/by-path”, which results in a naming scheme that is stable across all machines. The “Host Name” is simply the fully qualified DNS name of the iSCSI server. Finally, the “Source Path” is that adorable IQN seen earlier when creating the iSCSI target (“iqn.2004-04.com.qnap:ts-439proii:iscsi.kvmguests.bf6d84”).
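Behind the wizard, these three fields end up in a libvirt storage pool definition. The sketch below builds that XML from the same inputs, following the element layout of libvirt’s iSCSI pool format (`<source><host/><device/></source>` plus `<target><path/>`). The pool name `kvmguests` and the host name `nas.example.com` are assumptions for illustration; the resulting XML is the kind of document one would hand to `virsh pool-define` when scripting the same setup on several hosts.

```python
import xml.etree.ElementTree as ET


def iscsi_pool_xml(name: str, host: str, iqn: str,
                   target_path: str = "/dev/disk/by-path") -> str:
    """Build a libvirt iSCSI storage pool definition from the wizard's fields."""
    pool = ET.Element("pool", type="iscsi")
    ET.SubElement(pool, "name").text = name
    source = ET.SubElement(pool, "source")
    # "Host Name" field: the iSCSI server to log in to.
    ET.SubElement(source, "host", name=host)
    # "Source Path" field: the IQN of the target on that server.
    ET.SubElement(source, "device", path=iqn)
    target = ET.SubElement(pool, "target")
    # "Target path" field: where libvirt looks for the LUN device nodes.
    ET.SubElement(target, "path").text = target_path
    return ET.tostring(pool, encoding="unicode")


if __name__ == "__main__":
    print(iscsi_pool_xml(
        name="kvmguests",               # assumed: named after the QNAP target
        host="nas.example.com",         # hypothetical DNS name of the NAS
        iqn="iqn.2004-04.com.qnap:ts-439proii:iscsi.kvmguests.bf6d84",
    ))
```

Keeping the target path at /dev/disk/by-path by default matches the wizard’s suggestion, so every host that defines the pool sees the same device names.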

iSCSI storage pool parameters

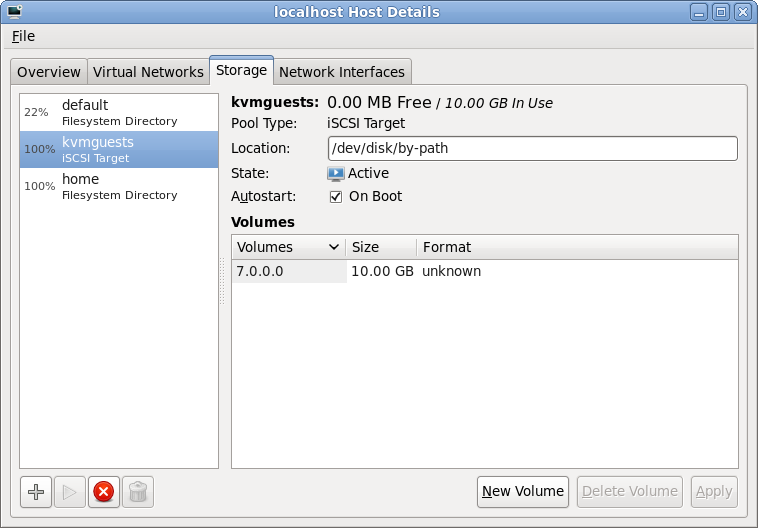

Browsing iSCSI LUNs

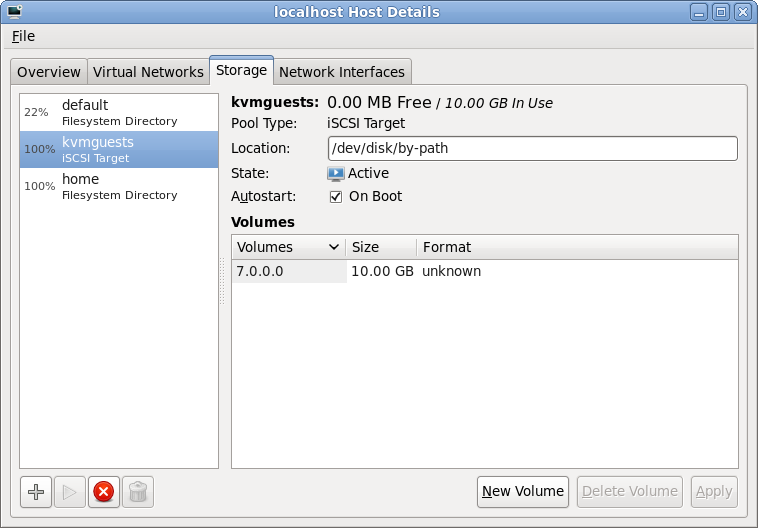

If all went to plan, libvirt will have connected to the iSCSI server and imported the LUNs associated with the iSCSI target specified in the wizard. After selecting the new storage pool, you should be able to see the LUNs and their sizes. These steps can be repeated on other hosts if the intention is to migrate guests between machines. Obviously, take care not to run the same VM on two machines at once, though. Ideally, use clustering software to protect against this scenario.
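The core idea behind that protection is exclusive ownership: a host must hold a lease on a guest’s disk before starting the guest, and a second host is refused while the lease is held. The toy sketch below shows only that rule. `LeaseTable` is hypothetical and purely in-memory; real clustering software keeps the lock somewhere all hosts can see and releases it when a host dies, neither of which this sketch attempts. The LUN path uses a documentation-range IP address as a stand-in.

```python
from dataclasses import dataclass, field


@dataclass
class LeaseTable:
    """Toy, in-memory record of which host currently runs each guest disk.

    Hypothetical illustration only: real protection needs a lock that every
    host can see and that is released automatically if a host fails.
    """
    owners: dict[str, str] = field(default_factory=dict)

    def acquire(self, disk_path: str, host: str) -> bool:
        """Claim disk_path for host; refuse if another host already holds it."""
        current = self.owners.get(disk_path)
        if current is not None and current != host:
            return False
        self.owners[disk_path] = host
        return True

    def release(self, disk_path: str, host: str) -> None:
        """Drop the claim, but only if host is the one holding it."""
        if self.owners.get(disk_path) == host:
            del self.owners[disk_path]


if __name__ == "__main__":
    leases = LeaseTable()
    lun = "/dev/disk/by-path/ip-192.0.2.10:3260-iscsi-iqn.example:target-lun-1"
    print(leases.acquire(lun, "hostA"))  # True: first host to start the guest
    print(leases.acquire(lun, "hostB"))  # False: would run the VM twice
    leases.release(lun, "hostA")
    print(leases.acquire(lun, "hostB"))  # True: hostA has stopped the guest
```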

iSCSI LUN browsing

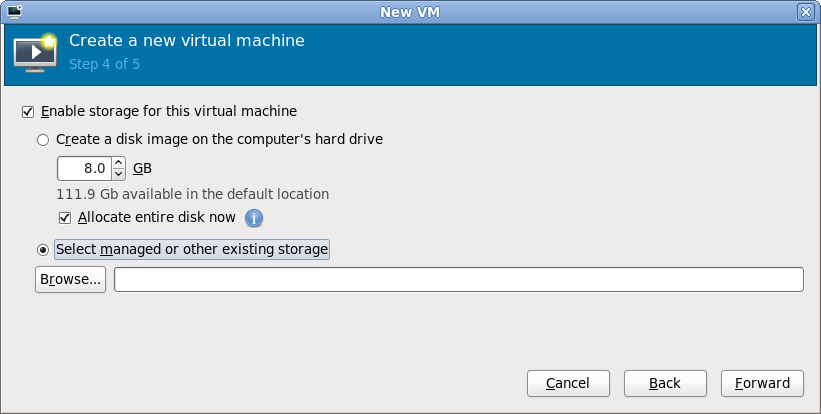

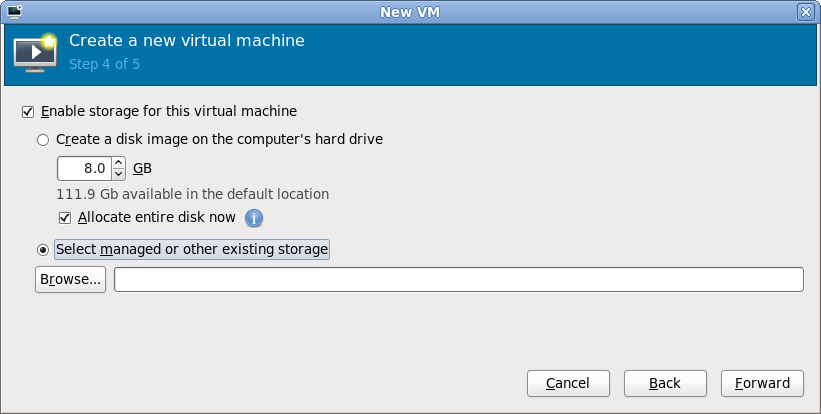

Selecting storage for a new VM

With the iSCSI storage pool configured in virt-manager, it is now possible to create a guest. After breezing through the first couple of steps in the “New VM” wizard, it is time to specify what storage to use for the new guest. By default, virt-manager will allocate storage from the local filesystem. That isn’t what we want here, so choose the “Select managed or other existing storage” option and press the “Browse” button.

New guest storage selection

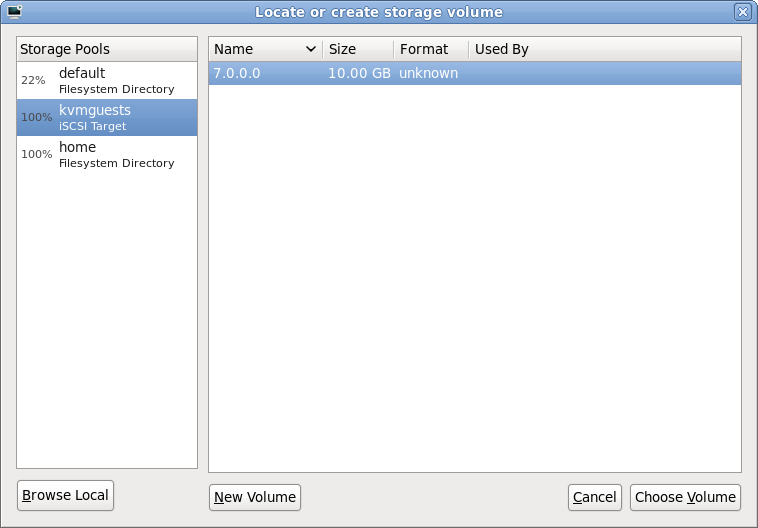

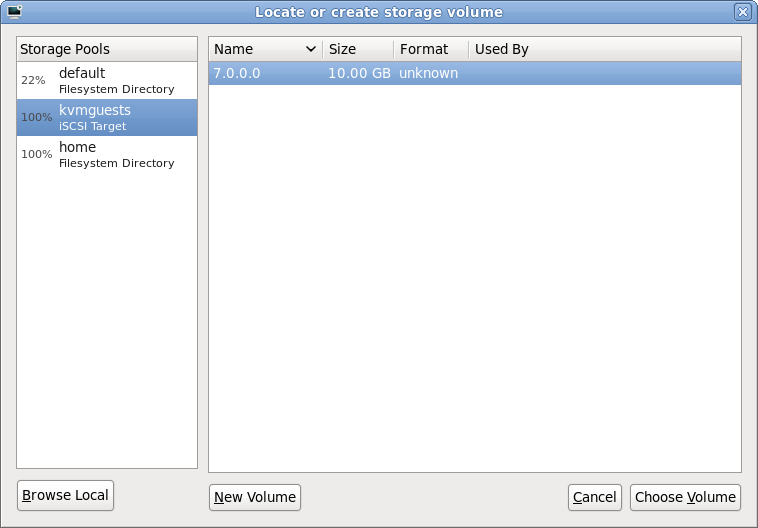

Browsing iSCSI LUNs for the new VM

The dialog that appears shows all the storage pools this libvirt connection knows about. This should match the pools seen a short while ago when configuring the iSCSI storage pool. It should be fairly obvious what to do at this point: select the iSCSI storage pool and the desired LUN (volume) within it, then press “Choose Volume”.
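Choosing a volume ultimately means the guest’s configuration refers to the LUN’s device node as a whole block device. The sketch below builds a libvirt `<disk type='block'>` element for such a path. The `vda`/`virtio` target, the raw driver type, and the example path are assumptions for illustration; virt-manager may pick a different bus or target name depending on the guest OS you selected.

```python
import xml.etree.ElementTree as ET


def block_disk_xml(lun_path: str, target_dev: str = "vda",
                   bus: str = "virtio") -> str:
    """Build a <disk> element that gives the guest a whole block device (the LUN)."""
    disk = ET.Element("disk", type="block", device="disk")
    # Assumption: the LUN is used directly as the guest disk, so treat it as raw.
    ET.SubElement(disk, "driver", name="qemu", type="raw")
    # The stable /dev/disk/by-path name, so every host refers to the same LUN.
    ET.SubElement(disk, "source", dev=lun_path)
    # How the disk appears inside the guest.
    ET.SubElement(disk, "target", dev=target_dev, bus=bus)
    return ET.tostring(disk, encoding="unicode")


if __name__ == "__main__":
    print(block_disk_xml(
        "/dev/disk/by-path/ip-192.0.2.10:3260-iscsi-"
        "iqn.2004-04.com.qnap:ts-439proii:iscsi.kvmguests.bf6d84-lun-0"  # hypothetical
    ))
```

Referring to the by-path name rather than /dev/sdX is what lets the same guest definition work on whichever host it is migrated to.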

iSCSI LUN selection

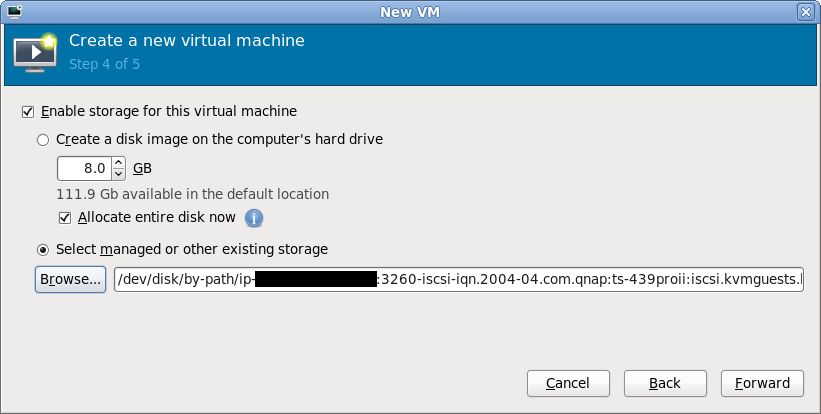

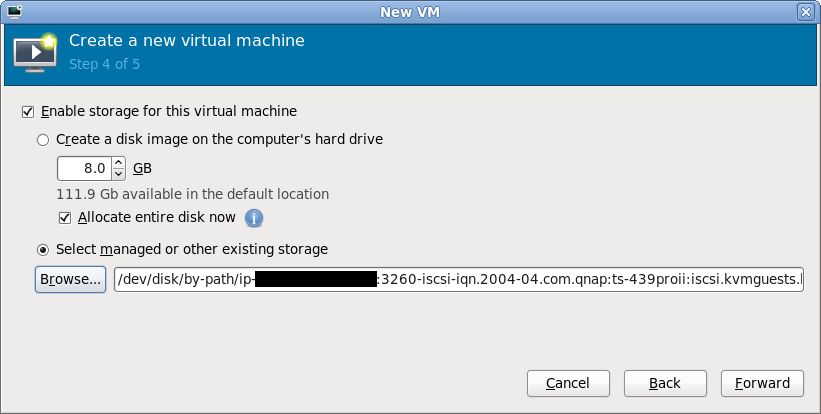

Continuing the new VM wizard

After choosing an iSCSI LUN, its path should now be displayed. It will be a rather long and scary-looking path under /dev/disk/by-path; don’t stare at it for too long. Just continue with the rest of the new VM wizard in the normal manner, and the guest will shortly be up and running, using iSCSI storage for its virtual disk.
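The path is less scary once you see that it simply encodes the portal address, the target IQN and the LUN number. The sketch below splits it apart, assuming the naming pattern udev commonly uses for iSCSI devices, `ip-ADDRESS:PORT-iscsi-IQN-lun-N`. That pattern comes from udev and could differ between versions, and the address 192.0.2.10 is a placeholder (3260 is the standard iSCSI port).

```python
import os
import re
from typing import NamedTuple

# Assumed udev naming pattern for iSCSI LUNs under /dev/disk/by-path.
BY_PATH_RE = re.compile(
    r"^ip-(?P<addr>.+?):(?P<port>\d+)-iscsi-(?P<iqn>.+)-lun-(?P<lun>\d+)$"
)


class IscsiPath(NamedTuple):
    addr: str   # portal address of the iSCSI server
    port: int   # portal TCP port
    iqn: str    # target IQN, as entered in the "Source Path" field
    lun: int    # LUN number within that target


def parse_by_path(path: str) -> IscsiPath:
    """Split a /dev/disk/by-path iSCSI name into portal, target IQN and LUN."""
    match = BY_PATH_RE.match(os.path.basename(path))
    if match is None:
        raise ValueError(f"not an iSCSI by-path name: {path}")
    return IscsiPath(match["addr"], int(match["port"]),
                     match["iqn"], int(match["lun"]))


if __name__ == "__main__":
    example = ("/dev/disk/by-path/ip-192.0.2.10:3260-iscsi-"
               "iqn.2004-04.com.qnap:ts-439proii:iscsi.kvmguests.bf6d84-lun-0")
    print(parse_by_path(example))
```

The address group is matched non-greedily and the IQN greedily, so colons and hyphens inside the IQN do not confuse the split.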

New guest storage path

Final words

Hopefully this quick walkthrough has shown that provisioning KVM guests on Fedora 12 using iSCSI is as easy as 1, 2, 3. The hard part is probably going to be on your iSCSI server, if you don’t have a NAS with a nice administrative interface like the QNAP’s. The libvirt storage pool management architecture includes support for actually constructing storage pools and allocating LUNs within them. For an LVM storage pool, libvirt would do this using vgcreate and lvcreate respectively. The iSCSI protocol standard does not support these kinds of operations, but many vendors provide ways to do them with custom APIs. For example, Dell EqualLogic iSCSI arrays have an SSH command shell that can be used for LUN creation and deletion. It would be desirable to add support for these vendor-specific LUN creation/deletion APIs to libvirt. It could even be possible to support iSCSI target creation from libvirt if suitable APIs were available. This would dramatically simplify provisioning new guests on iSCSI, by enabling everything to be done from virt-manager without touching the NAS admin interface.
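One way to picture such vendor support is a common interface with one implementation per backend. The sketch below is entirely hypothetical: `LunProvisioner` and its subclasses are not libvirt APIs, and they only build command lines rather than running them. The LVM commands use standard `vgcreate`/`lvcreate`/`lvremove` options but are not necessarily what libvirt itself runs. The SSH variant takes its command templates from the caller, since no particular vendor CLI syntax is assumed here.

```python
from abc import ABC, abstractmethod


class LunProvisioner(ABC):
    """Hypothetical interface for backends that can create and delete volumes."""

    @abstractmethod
    def create_volume(self, name: str, size_mib: int) -> list[str]:
        """Return the command that would create the volume."""

    @abstractmethod
    def delete_volume(self, name: str) -> list[str]:
        """Return the command that would delete the volume."""


class LvmProvisioner(LunProvisioner):
    """LVM flavour: volumes are logical volumes inside one volume group."""

    def __init__(self, vg: str) -> None:
        self.vg = vg

    def build_pool(self, devices: list[str]) -> list[str]:
        # Constructing the pool itself: turn block devices into a volume group.
        return ["vgcreate", self.vg, *devices]

    def create_volume(self, name: str, size_mib: int) -> list[str]:
        return ["lvcreate", "--name", name, "--size", f"{size_mib}M", self.vg]

    def delete_volume(self, name: str) -> list[str]:
        return ["lvremove", "--force", f"{self.vg}/{name}"]


class SshShellProvisioner(LunProvisioner):
    """Vendor flavour: run an array's own command shell over SSH.

    The command templates are supplied by the caller; none are assumed here.
    """

    def __init__(self, login: str, create_tmpl: str, delete_tmpl: str) -> None:
        self.login = login
        self.create_tmpl = create_tmpl
        self.delete_tmpl = delete_tmpl

    def create_volume(self, name: str, size_mib: int) -> list[str]:
        return ["ssh", self.login,
                self.create_tmpl.format(name=name, size_mib=size_mib)]

    def delete_volume(self, name: str) -> list[str]:
        return ["ssh", self.login, self.delete_tmpl.format(name=name)]


if __name__ == "__main__":
    lvm = LvmProvisioner("guests")
    print(lvm.build_pool(["/dev/sdb", "/dev/sdc"]))
    print(lvm.create_volume("vm1", 10240))
    # Placeholder templates standing in for a real vendor's CLI syntax.
    array = SshShellProvisioner("admin@array.example.com",
                                "make-lun {name} {size_mib}", "drop-lun {name}")
    print(array.create_volume("vm1", 10240))
```

Returning argument lists keeps the sketch side-effect free; a real backend would execute them (e.g. via `subprocess.run`) and handle failures.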